Syncing Toronto Recreation Schedules to Google Calendar

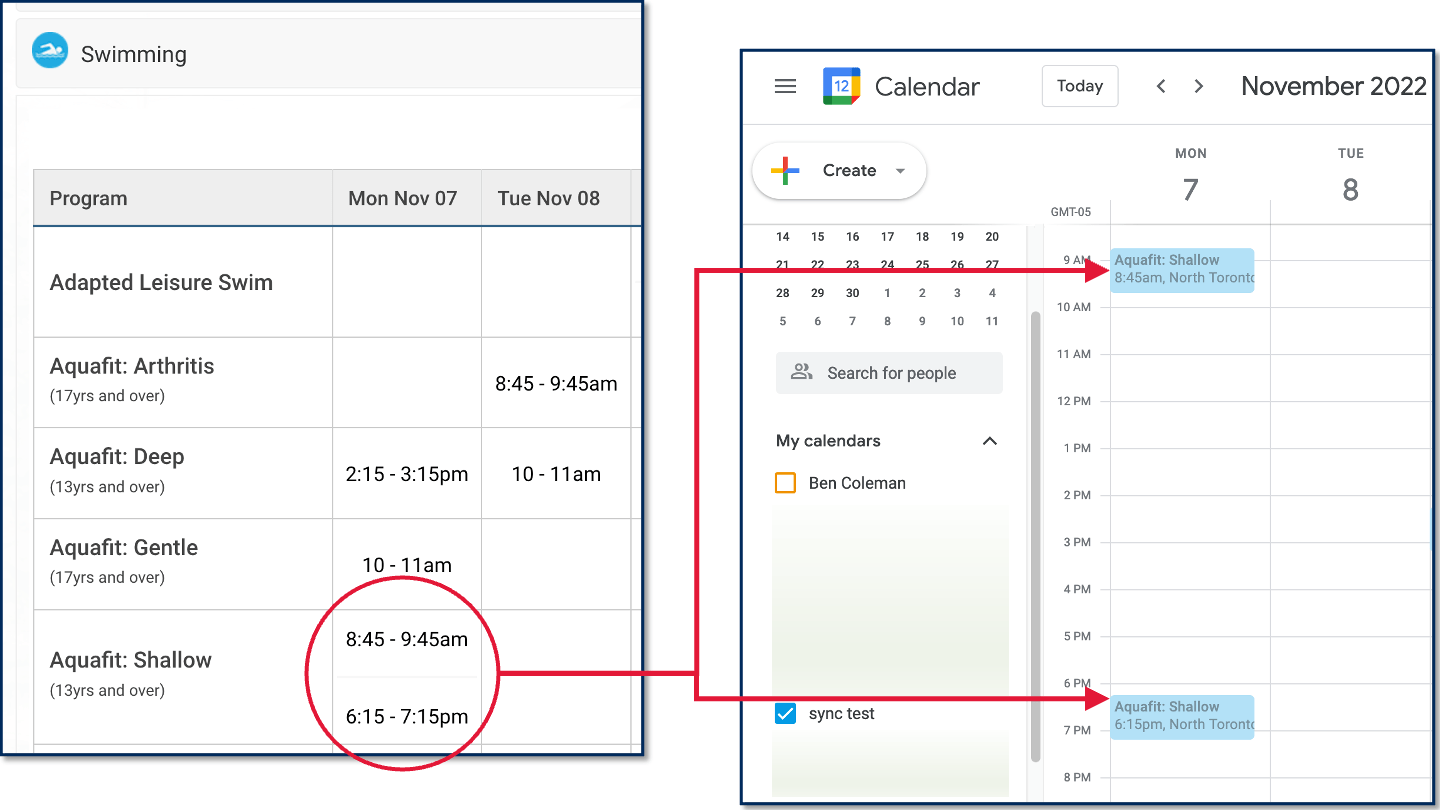

Toronto has a wealth of community centres and recreation facilities with great drop-in programs, but the only way to check their schedules electronically is to go to the webpage for your local centre.

Wouldn’t it be great to have the schedules for specific drop-in programs sync to your calendar instead? To solve this problem, I made a Google Apps Script that will sync drop-in schedules to your Google Calendar automatically.

Get the Script

You can get the code and instructions for the Toronto Recreation Schedule to Google Calendar script on GitHub.

In my case, I like to go swimming a couple of times a week and wanted to be able to check the lane swim times for my local pool at a glance. However, you could use this script for any drop-in program schedule, whether it’s for arts programs, fitness, general interest programs, skating, sports, or swimming.

What if I don’t use Google Calendar?

If you don’t normally use Google Calendar, you can still use a Google account to set things up and then share the Google calendar with your regular calendar account (e.g. Outlook). The easiest method (if it works) is to find the “Share with specific people” section of your Google Calendar settings and then add the email address for your main calendar account. You should receive an email at your main account with instructions or options for adding the calendar.

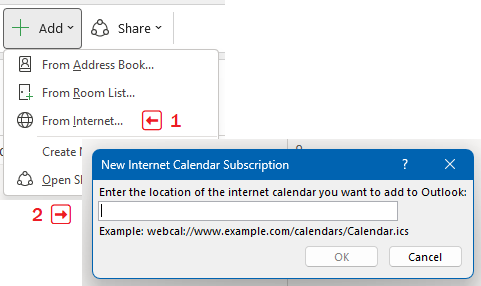

If that doesn’t work (e.g. if you’re using a work account that doesn’t let you add an external calendar the normal way), you can add the Google calendar to your main calendar by going to the “Integration” section of your Google Calendar settings and copying the “Secret address in iCal format”. You can use this private iCal address to add the calendar to your main app or account.

For example, in Outlook on desktop, you would go to “Add Calendar”, select “From Internet…” and then paste in your secret iCal feed address. There’s a similar option for Outlook on the web as well.

Could I do other web scraping tasks with Google Apps Script?

Possibly, but it would be pretty difficult for more complex pages that are hard to parse or that need to be loaded interactively (e.g. single-page applications). For the City of Toronto website, the relevant info is relatively easy to pull from the page source using regular expressions. Another case where I’ve been able to use Google Apps Script is a website that was just loading a JSON file from a static URL. In that case, I pulled the JSON data directly into the script.
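To give a rough idea of what those two approaches can look like, here is a minimal sketch. It is not the code from my script: the HTML markup, the JSON shape, and the names `SAMPLE_HTML`, `extractSessions`, and `parseScheduleJson` are all made up for illustration, and a real page will need its own pattern.

```javascript
// Hypothetical markup for illustration only. The real page source will look
// different, so the regular expression below would need to be adapted.
const SAMPLE_HTML = `
<tr><td class="program">Lane Swim</td><td class="day">Mon</td><td class="time">07:00 - 08:30</td></tr>
<tr><td class="program">Aquafit</td><td class="day">Mon</td><td class="time">09:00 - 10:00</td></tr>
<tr><td class="program">Lane Swim</td><td class="day">Wed</td><td class="time">12:00 - 13:00</td></tr>
`;

// Approach 1: pull matching rows out of the page source with a regular expression.
function extractSessions(html, programName) {
  // One capture group each for program name, day, start time, and end time.
  const rowPattern = new RegExp(
    '<td class="program">([^<]+)</td>\\s*' +
    '<td class="day">([^<]+)</td>\\s*' +
    '<td class="time">(\\d{2}:\\d{2}) - (\\d{2}:\\d{2})</td>',
    'g'
  );
  const sessions = [];
  let match;
  // With the "g" flag, exec() resumes after the previous match on each call
  // and returns null once there are no more rows.
  while ((match = rowPattern.exec(html)) !== null) {
    const program = match[1].trim();
    if (program === programName) {
      sessions.push({ program: program, day: match[2], start: match[3], end: match[4] });
    }
  }
  return sessions;
}

// Approach 2: the site serves JSON from a static URL, so no HTML parsing is needed.
// Assumes (hypothetically) that the JSON is an array of
// { program, day, start, end } objects.
function parseScheduleJson(jsonText, programName) {
  return JSON.parse(jsonText).filter(function (session) {
    return session.program === programName;
  });
}
```

In Apps Script, the `html` or `jsonText` string would typically come from `UrlFetchApp.fetch(url).getContentText()`. Keeping the parsing in plain functions like these means you can test them against a saved copy of the page before wiring up the calendar side.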

If your use case is simple like this, there are several pros to using Google Apps Script: it’s free, easy to configure, comes with good documentation, integrates well with Google services, and you only need to know JavaScript and have some basic web development skills.

If you need to grab something more complicated, you’ll probably have to either pay for a tool like visualping.io or dig into some more advanced tools like Selenium (for headless browsing), Beautiful Soup (for better HTML parsing), and/or Scrapy (for building web crawlers).